What is Hadoop, and how does it relate to cloud?

Hadoop can be installed on any cloud platform, such as those from AWS, Microsoft, or Google. Hadoop itself does not provide middleware services for managing applications, storage, and software. Cloud computing, however, can manage Hadoop and its related components, such as source systems, target databases, and runtime environments.

What is CDH Cloudera?

Cloudera Data Science Workbench provides connectivity not only to CDH and HDP but also to the systems your data science teams rely on for analysis. Automated data and analytics pipelines. Cloudera Data Science Workbench lets data scientists manage their own analytics pipelines, including built-in scheduling, monitoring, and email alerting. ...

Is Cloudera open source?

Products that are currently closed source, such as Cloudera Manager and Cloudera Data Science Workbench (CDSW), will be released as open source software after February 2020. Starting in mid-November of this year, access to binaries will be limited to customers with a current subscription agreement.

How to start learning Hadoop for beginners?

For beginners:

- You need to understand the Linux operating system. ...

- Learn one programming language, such as Java or Python. ...

- Many vendors, such as Cloudera (CDH), Hortonworks, and MapR, provide sandbox environments with Hadoop pre-built. ...

- Understand the Hadoop ecosystem components, such as HDFS, MapReduce, Pig, Hive, HBase, Sqoop, and Flume.

What is use of Cloudera in Hadoop?

Cloudera was launched to help users deploy and manage Hadoop, bringing order and understanding to the data that serves as the lifeblood of any modern organization. Cloudera allows for a depth of data processing that goes beyond just data accumulation and storage.

Is Hadoop part of Cloudera?

Core Hadoop, including HDFS, MapReduce, and YARN, is part of the foundation of Cloudera's platform.

What is Cloudera in big data?

Cloudera's Enterprise Data Hub (EDH) is a modern big data platform powered by Apache Hadoop at the core. It provides a central scalable, flexible, secure environment for handling workloads from batch, interactive, to real-time analytics.

What is Hadoop used for?

Apache Hadoop is an open source framework that is used to efficiently store and process large datasets ranging in size from gigabytes to petabytes of data. Instead of using one large computer to store and process the data, Hadoop allows clustering multiple computers to analyze massive datasets in parallel more quickly.

What is difference between Hadoop and Cloudera?

Hortonworks' business growth strategy focuses on embedding Hadoop into existing data platforms, while Cloudera takes the approach of a traditional software provider that profits from product sales and competes with other commercial software providers.

Is Cloudera a database?

Cloudera delivers an operational database that serves traditional structured data alongside new unstructured data within a unified open-source platform.

Who is using Cloudera?

Companies using Cloudera Hortonworks for Database Management include: Walmart, a United States based Retail organisation with 2300000 employees and revenues of $572.75 billion, Ford Motor Company, a United States based Automotive organisation with 183000 employees and revenues of $136.00 billion, Express Scripts ...

How do I start Hadoop in Cloudera?

- Step 1: Configure a Repository.

- Step 2: Install JDK.

- Step 3: Install Cloudera Manager Server.

- Step 4: Install Databases. Install and Configure MariaDB. Install and Configure MySQL. Install and Configure PostgreSQL. ...

- Step 5: Set up the Cloudera Manager Database.

- Step 6: Install CDH and Other Software.

- Step 7: Set Up a Cluster.

What is Cloudera platform?

Cloudera Data Platform (CDP) is a hybrid data platform designed for unmatched freedom to choose—any cloud, any analytics, any data. CDP delivers faster and easier data management and data analytics for data anywhere, with optimal performance, scalability, and security.

What is Hadoop example?

Examples of Hadoop: Retailers use it to help analyze structured and unstructured data to better understand and serve their customers. In the asset-intensive energy industry, Hadoop-powered analytics are used for predictive maintenance, with input from Internet of Things (IoT) devices feeding data into big data programs.

Is Hadoop a database?

Hadoop is not a database, but rather an open-source software framework specifically built to handle large volumes of structured and semi-structured data.

What are three features of Hadoop?

Features of Hadoop that make it popular:

- Open Source: Hadoop is open-source, which means it is free to use. ...

- Highly Scalable Cluster: Hadoop is a highly scalable model. ...

- Fault Tolerance is Available: ...

- High Availability is Provided: ...

- Cost-Effective: ...

- Hadoop Provides Flexibility: ...

- Easy to Use: ...

- Hadoop Uses Data Locality

Is Cloudera Hadoop free?

The statement seems to be worded very carefully but what it really says is that to use Cloudera software in production you will need a paid subscription agreement. Only customers, trial users and developers can access the products.

What is difference between CDH and CDP?

CDH 6.3 is the last major version of CDH. CDP is the new distribution from Cloudera which effectively replaces CDH. CDP is designed to run on-premises (like CDH) but it is also a cloud-native technology that can be run in the public cloud. CDP is also designed to support hybrid and private cloud architectures.

How is Snowflake different from Cloudera?

Cloudera is categorized under Big Data Analytics, Key-Value Databases, and Big Data Integration Platforms, whereas Snowflake is categorized under Database Management Systems (DBMS) and Columnar Databases.

What is Apache Spark vs Hadoop?

Apache Spark is designed as an interface for large-scale processing, while Apache Hadoop provides a broader software framework for the distributed storage and processing of big data. Both can be used either together or as standalone services.

How does Hadoop work?

Instead of using one large computer to store and process data, Hadoop uses clusters of multiple computers to analyze massive datasets in parallel. Hadoop can handle various forms of structured and unstructured data, which gives companies greater speed and flexibility for collecting, processing, and analyzing big data than can be achieved with relational databases and data warehouses.
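
As a rough illustration of the parallel model described above, the following is a minimal single-process sketch of the map, shuffle, and reduce steps used by MapReduce, applied to a word count. It does not use Hadoop itself. The function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) and the sample text are assumptions made for this example only.

```python
from collections import defaultdict


def map_phase(chunk):
    """Emit a (word, 1) pair for every word in one chunk of text."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle_phase(mapped_chunks):
    """Group all emitted values by key, as happens between map and reduce."""
    grouped = defaultdict(list)
    for pairs in mapped_chunks:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the counts collected for each word."""
    return {word: sum(counts) for word, counts in grouped.items()}


def word_count(chunks):
    # On a real cluster each chunk would be mapped on a different machine;
    # here the chunks are processed one after another in a single process.
    mapped = [map_phase(chunk) for chunk in chunks]
    return reduce_phase(shuffle_phase(mapped))


if __name__ == "__main__":
    chunks = ["big data big cluster", "data node data"]
    print(word_count(chunks))
```

Because each chunk is mapped independently of the others, the map step is the part that a cluster can spread across many machines.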

What is Hadoop used for?

Analytics and big data. A wide variety of companies and organizations use Hadoop for research, production data processing, and analytics that require processing terabytes or petabytes of big data, storing diverse datasets, and data parallel processing.

What are the benefits of Hadoop?

In the Hadoop ecosystem, even if individual nodes experience high rates of failure when running jobs on a large cluster, data is replicated across a cluster so that it can be recovered easily should disk, node, or rack failures occur.
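
The effect of replication can be sketched with a small simulation. This is a simplified model, not HDFS code: it places replicas round-robin, whereas real HDFS placement is rack-aware. The helper names (`place_replicas`, `recoverable_blocks`) and the node and block labels are hypothetical. The default of three replicas matches the usual HDFS default.

```python
def place_replicas(block_ids, nodes, replication=3):
    """Assign each block to `replication` distinct nodes, round-robin."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement = {}
    for i, block in enumerate(block_ids):
        placement[block] = [nodes[(i + r) % len(nodes)] for r in range(replication)]
    return placement


def recoverable_blocks(placement, failed_nodes):
    """Return the blocks that still have at least one replica on a live node."""
    failed = set(failed_nodes)
    return {
        block
        for block, holders in placement.items()
        if any(node not in failed for node in holders)
    }


if __name__ == "__main__":
    nodes = ["n1", "n2", "n3", "n4"]
    placement = place_replicas(["b0", "b1", "b2", "b3"], nodes)
    # Two failed nodes: every block still has a surviving replica.
    print(sorted(recoverable_blocks(placement, ["n1", "n2"])))
    # Three failed nodes: the block stored only on those nodes is lost.
    print(sorted(recoverable_blocks(placement, ["n1", "n2", "n3"])))
```

With three replicas, a block becomes unavailable only when all three nodes holding it fail at the same time.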

Why do you need Hadoop?

Apache Hadoop was born out of a need to more quickly and reliably process an avalanche of big data. Hadoop enables an entire ecosystem of open source software that data-driven companies are increasingly deploying to store and parse big data. Rather than rely on hardware to deliver critical high availability, Hadoop’s distributed nature is designed to detect and handle failures at the application layer, delivering a highly available service on top of a cluster of computers to reduce the risks of independent machine failures.

What is Apache Hadoop?

Apache Hadoop software is an open source framework that allows for the distributed storage and processing of large datasets across clusters of computers using simple programming models. Hadoop is designed to scale up from a single computer to thousands of clustered computers, with each machine offering local computation and storage. In this way, Hadoop can efficiently store and process large datasets ranging in size from gigabytes to petabytes of data.

How does Hadoop control costs?

Hadoop controls costs by storing data more affordably per terabyte than other platforms. Instead of thousands to tens of thousands of dollars per terabyte being spent on hardware, Hadoop delivers compute and storage on affordable standard commodity hardware for hundreds of dollars per terabyte.

What is Hadoop Common?

Hadoop Common: Hadoop Common includes the libraries and utilities used and shared by other Hadoop modules.

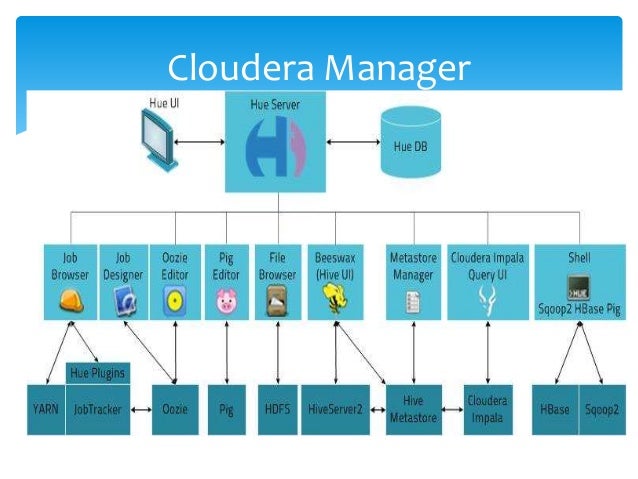

What is Cloudera Manager?

Cloudera Manager is the Hadoop administration tool that’s trusted by the professionals and powers the largest Hadoop deployments. With intelligent defaults and unique monitoring customizations, it drastically simplifies cluster operations. Designed with an extensible foundation, it quickly and seamlessly integrates with both third-party tools and the newest Hadoop components for unified, reliable operations.

What is Cloudera Director?

Cloudera Director is built for powering Hadoop across all the major cloud environments. It provides the flexibility to deploy on your environment of choice —using built-in integrations with Amazon Web Services and Google Cloud Platform, and an Open Plugin Framework to integrate with custom environments. With a single multi-cluster, multi-environment view, you can easily manage elasticity and dynamic cluster lifecycles across common workloads.

What is Hadoop software?

Hadoop is an open source project that seeks to develop software for reliable, scalable, distributed computing—the sort of distributed computing that would be required to enable big data. Hadoop is a series of related projects but at the core we have the following modules:

How does cloud play into this?

The cloud is ideally suited to provide the big data computation power required for the processing of these large parallel data sets. Cloud has the ability to provide the flexible and agile computing platform required for big data, as well as the ability to call on massive amounts of computing power (to be able to scale as needed), and would be an ideal platform for the on-demand analysis of structured and unstructured workloads.

What is the purpose of big data?

Analytics provides an approach to decision making through the application of statistics, programming and research to discern patterns and quantify performance. The goal is to make decisions based on data rather than intuition. Simply put, evidence-based or data-driven decisions are considered to be better ...

Is Hadoop unstoppable?

In a recent article for application development and delivery professionals (2014, Forrester), Gualtieri and Yuhanna wrote that “Hadoop is unstoppable.” In their estimation it is growing “wildly and deeply into enterprises.”

Multi-tenant management and visibility

Open your cluster to many workloads and user groups, while still meeting priority SLAs through dynamic resource management and cluster utilization reporting. Easily allocate YARN and Impala resources across different tenants and automatically adjust based on time, day, and weighted priority.
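
The idea of weighted, time-dependent allocation can be sketched as a proportional split. This is only an illustration of the concept, not how Cloudera Manager or YARN computes shares. The tenant names, the weights, the day and night schedule, and the functions `weighted_shares` and `weights_for_hour` are all assumptions made for this example.

```python
def weighted_shares(total_vcores, weights):
    """Split `total_vcores` across tenants in proportion to their weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("at least one tenant needs a positive weight")
    return {
        tenant: total_vcores * weight / total_weight
        for tenant, weight in weights.items()
    }


def weights_for_hour(hour):
    """Hypothetical schedule: ad hoc queries are favoured by day, batch ETL at night."""
    if 8 <= hour < 20:
        return {"etl": 1, "adhoc": 3}
    return {"etl": 3, "adhoc": 1}


if __name__ == "__main__":
    for hour in (3, 14):
        print(hour, weighted_shares(100, weights_for_hour(hour)))
```

In this sketch, changing the weights for a time window is enough to shift capacity between tenants without changing the total.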

Extensible integration

Get unified visibility into Cloudera Enterprise and the leading third-party tools, all through Cloudera Manager. Designed with an extensible framework, partner tools can seamlessly integrate with Cloudera Manager for truly centralized administration.

Trusted for production

Run your most critical workloads with ease at any scale with Cloudera Manager. As the only Hadoop administration tool with comprehensive rolling upgrades, you can always access the leading platform innovations without the downtime.

Built-in proactive and predictive support

Cloudera Manager gives you a direct connection to Cloudera’s world-class support. With a simple click of a button, you can send diagnostic bundles to Cloudera Support for the quickest time-to-resolution.

What is a Hadoop daemon?

A Hadoop daemon collects metrics in several contexts. For example, data nodes collect metrics for the “dfs”, “rpc” and “jvm” contexts. The daemons that collect different metrics in Hadoop (for Hadoop-1 and Hadoop-2) are listed below:

What is Metrics2 in Hadoop?

The Metrics2 system for Hadoop provides a gold mine of real-time and historical data that help monitor and debug problems associated with the Hadoop services and jobs. Ahmed Radwan is a software engineer at Cloudera, where he contributes to various platform tools and open-source projects.

Does Hadoop have metrics?

This blog post focuses on the features and use of the Metrics2 system for Hadoop, which allows multiple metrics output plugins to be used in parallel, supports dynamic reconfiguration of metrics plugins, provides metrics filtering, and allows all metrics to be exported via JMX.
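
The combination of parallel output plugins, filtering, and reconfiguration at run time can be pictured with a toy model. The class below is not the Metrics2 API and does not reflect its internals. `MetricsHub`, its methods, and the sink names are hypothetical, and only the context names "dfs" and "jvm" come from the text.

```python
from fnmatch import fnmatchcase


class MetricsHub:
    """Toy fan-out: each published record goes to every sink whose filter accepts it."""

    def __init__(self):
        self.sinks = {}  # sink name -> (context pattern, collected records)

    def add_sink(self, name, pattern="*"):
        """Register a sink at run time; `pattern` is a glob over context names."""
        self.sinks[name] = (pattern, [])

    def remove_sink(self, name):
        """Drop a sink at run time without touching the others."""
        self.sinks.pop(name, None)

    def publish(self, context, metric, value):
        for pattern, records in self.sinks.values():
            if fnmatchcase(context, pattern):
                records.append((context, metric, value))

    def records(self, name):
        return list(self.sinks[name][1])


if __name__ == "__main__":
    hub = MetricsHub()
    hub.add_sink("file")             # receives every context
    hub.add_sink("jvm_only", "jvm")  # filtered to the "jvm" context
    hub.publish("dfs", "blocks_read", 10)
    hub.publish("jvm", "gc_count", 2)
    print(hub.records("file"))
    print(hub.records("jvm_only"))
```

Each sink sees the same stream of records independently, so adding or removing one does not affect what the others receive.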

Is Apache Hadoop a trademark?

Apache Hadoop and associated open source project names are trademarks of the Apache Software Foundation.

What is Hadoop software?

The Apache Hadoop software library is a framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Rather than rely on hardware to deliver high-availability, the library itself is designed to detect and handle failures at the application layer, so delivering a highly-available service on top of a cluster of computers, each of which may be prone to failures.

What are the advantages of Cloudera?

Here are some of the advantages of Cloudera Hadoop: 1. Cloudera provides a tool, SCM, that largely automates setting up a Hadoop cluster for you. Cloudera bundles the Hadoop-related projects, which are easy to install on any standard Linux box.

Does Cloudera support CDH?

A good number of large enterprises use CDH with Cloudera support. (Cloudera provides various support packages.)

Big Data: More Than Just Analytics

What Is Hadoop?

- Hadoop is an open source project that seeks to develop software for reliable, scalable, distributed computing—the sort of distributed computing that would be required to enable big data. Hadoop is a series of related projects but at the core we have the following modules: • Hadoop Distributed File System (HDFS):This is a powerful distributed file s...

Big Data, Hadoop and The Cloud

- In a recent article for application development and delivery professionals (2014, Forrester), Gualtieri and Yuhanna wrote that “Hadoop is unstoppable.” In their estimation it is growing “wildly and deeply into enterprises.” In their article they go on to review several vendors including Amazon, Cloudera, IBM and others. They conclude IBM is a leader from a market presence, the s…
